The former deputy PM, now an executive at the company that owns Facebook and Instagram, says the line between human and “synthetic content” is becoming “blurred”, as the firm said it planned to label all AI images on its platforms.

Meta, which also owns the Threads social media site, has already been placing “Imagined with AI” labels on photorealistic images created using its own Meta AI feature.

The tech giant said it is now building “industry-leading tools” that will allow it to identify invisible markers on images generated by artificial intelligence that have come from other sites such as Google, OpenAI, Microsoft or Adobe.

Meta has said it will roll out the labelling on Facebook, Instagram and Threads in the coming months.

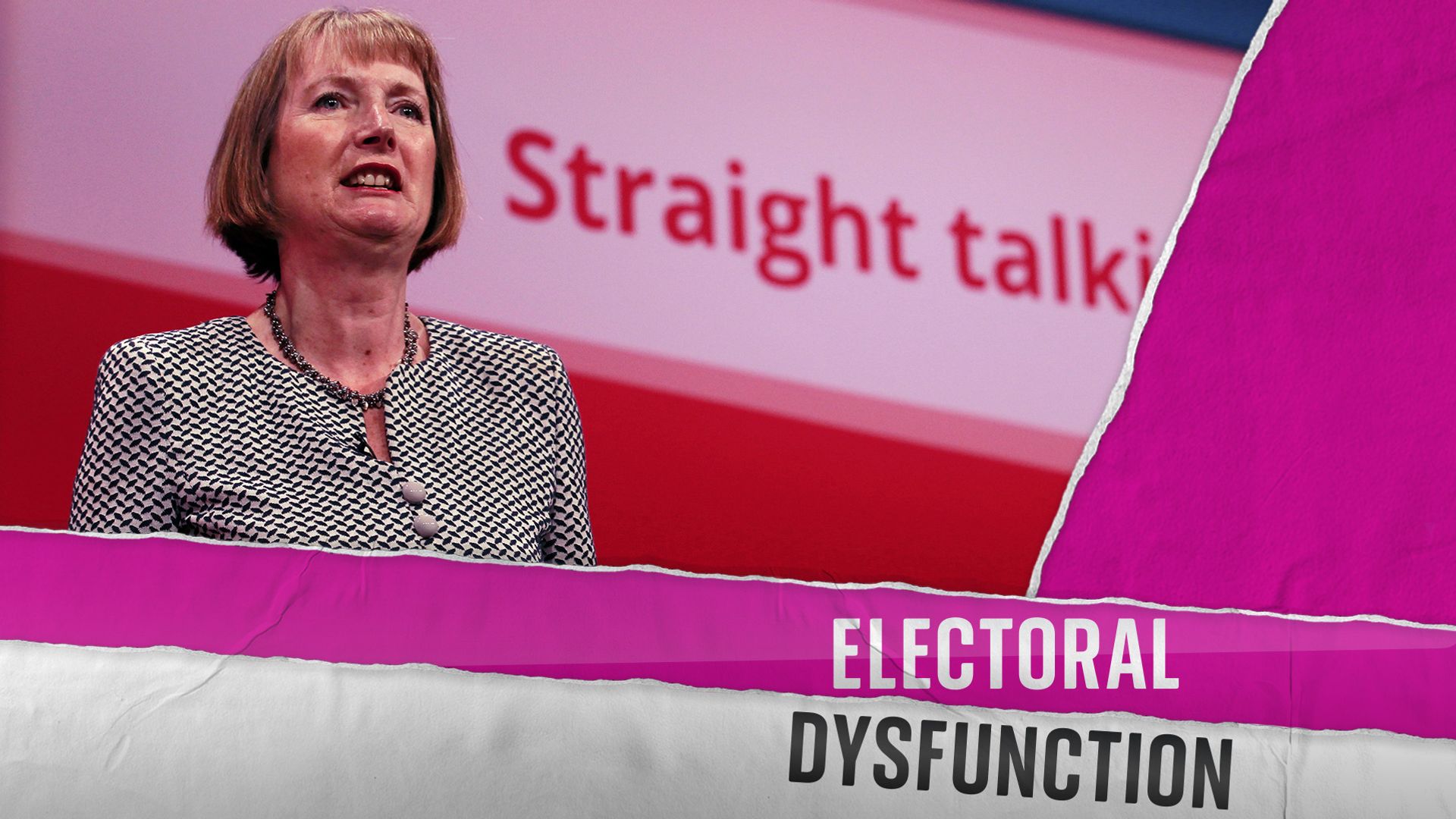

Sir Nick Clegg, who is now Meta’s president of global affairs, wrote in a statement that the move comes during a year when a “number of important elections are taking place around the world”.

He added: “During this time, we expect to learn much more about how people are creating and sharing AI content, what sort of transparency people find most valuable, and how these technologies evolve. What we learn will inform industry best practices and our own approach going forward.”

Sir Nick said the move is important at a time when “the difference between human and synthetic content” is becoming “blurred”.

Meta says it has been working with “industry partners on common technical standards for identifying AI content”, adding that it will be able to label AI-generated images when its technology detects “industry standard indicators”.

The company says the labels will come in “all languages”.

Science and technology editor

Well first, it’s become impossible to ignore.

By one recent estimate, since 2022 alone 15 billion images have been generated by AI and uploaded to the internet. Like much of the content online, most of them are of the harmless, even silly, variety: cute kittens, sci-fi, anime.

But a large number are harmful. Things like fake explicit images of public or private individuals uploaded without their consent, or politically motivated misinformation designed to manipulate the truth.

But the other reason for the reaction is that companies like Meta know they are going to be forced to do something about it.

The UK passed the Online Safety Act last year, which makes uploading fake explicit images of a person without their consent a crime. Lawmakers in the US last week told social media bosses that they were failing in their duty to keep users safe online and that laws to compel them to do more were now the only course of action.

Will Meta’s announcement make a difference? Yes, in that it will likely compel its rivals to follow suit and will certainly help make it clearer which images are AI-generated and which aren’t.

But several research teams have shown that digital watermarking – even watermarks buried in the metadata of an image – can be removed with little expertise. Even Meta admits the technology isn’t perfect.

The real test will be whether we see, in the coming months, a decrease in the explosion of harmful fake images appearing online. And that’s probably going to be easier said than done.

While a superstar like Taylor Swift might be able to pressure Big Tech into taking down illegal images of her, the same can’t be said for the 3.5 billion users of one Meta platform or another.

If that doesn’t happen, the next test will be whether we see large and powerful tech companies in court over the issue. Some predict that only hitting Big Tech in the pocket will really bring about change.

Sir Nick has said it’s not yet possible for Meta to identify all AI-generated content, because those who produce the images are able to strip out invisible markers.

He added: “We’re working hard to develop classifiers that can help us to automatically detect AI-generated content, even if the content lacks invisible markers. At the same time, we’re looking for ways to make it more difficult to remove or alter invisible watermarks.”

Sir Nick said this part of Meta’s work is important because the use of AI is “likely to become an increasingly adversarial space in the years ahead”.

“People and organisations that actively want to deceive people with AI-generated content will look for ways around safeguards that are put in place to detect it. Across our industry and society more generally, we’ll need to keep looking for ways to stay one step ahead,” he said.

Meta also plans to add a feature to its platform that will allow people to disclose when they are sharing AI-generated content so the company can add a label to it.

Taylor Swift targeted in AI images

AI images have proven controversial in recent months, with many of them so realistic that users are often unable to tell they are not real.

In January, deepfake images of pop superstar Taylor Swift, which were believed to have been made using AI, were spread widely on social media.

US President Biden’s spokesperson said the sexually explicit images of the star were “very alarming”.

White House Press Secretary Karine Jean-Pierre said social media companies have “an important role to play in enforcing their own rules”, as she urged Congress to legislate on the issue.

A royal reunion that was not all it seemed

In the UK, a slideshow of eight images appearing to show Prince William and Prince Harry at the King’s coronation spread widely on Facebook in 2023, with more than 78,000 likes.

One of them showed a seemingly emotional embrace between William and Harry after reports of a rift between the brothers.

However, none of the eight images were genuine.

Meanwhile, an AI-generated mugshot purporting to show Donald Trump being formally booked on 13 election fraud charges fooled many people around the world in 2023.